OpenWGA 7.6 - OpenWGA Concepts and Features

Clustering

OpenWGA can run as a cluster in which multiple OpenWGA server nodes act like a single server and all access the same databases.

This is recommended for all OpenWGA installations where downtime is not acceptable. All hardware fails at some point, and some operations on a running system, such as upgrading the OpenWGA version, require a server restart, so a cluster is the only way to ensure continuous service.

A cluster also lets you react dynamically to increased traffic: adding more nodes allows the system to process more requests.

In addition, the OpenWGA Enterprise Edition provides a cluster communication framework, which adds the following features to the cluster:

- Node auto-detection in the LAN

- Distribution of administrative data like blocked logins

- Distribution of commands to cluster nodes via OpenWGA admin client

- Cluster status monitoring via OpenWGA admin client

Configuring a cluster

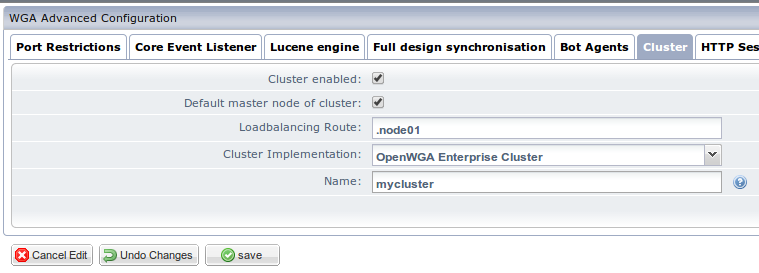

You configure individual OpenWGA server nodes for cluster usage in the OpenWGA cluster configuration. You find it in OpenWGA admin client in "expert mode" (checkbox on the top right), in menu "Configuration" > Submenu "Advanced Configuration" > Tab "Cluster":

The settings explained:

- Cluster enabled: Tells the current OpenWGA server that it is part of a cluster.

- Default master node of cluster: This should only be enabled on one server node of the cluster. It marks the server that is the master node by default and runs "single node operations", that is, operations that should not run on multiple nodes.

- Loadbalancing route: A string that a load balancer can use to identify the route to this server. It should be different for every node. OpenWGA adds this string as a session cookie named "WGLBROUTE" to each browser client that makes its first request to this node. A load balancer can then use this cookie to always direct this client to this node, enforcing "sticky sessions" (a client always uses the same node for one session). Note: In this example the route starts with a dot "." because the Apache Load Balancer module will ignore the route cookie if it does not start with a dot.

- Cluster implementation: Chooses a backend for internal communication in the cluster, used for sharing information (like blocked logins) and transporting commands between the nodes. If you use the OpenWGA Enterprise Edition you can choose the OpenWGA Enterprise Cluster here. Otherwise only "Single node (cluster)" is available, which simulates a single-node cluster and does not actually communicate with other nodes.

- Name: A name for the cluster. Must be the same on all nodes. Nodes on a LAN may find the cluster and its members automatically based on this name.
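To illustrate how a load balancer might use the "WGLBROUTE" cookie for sticky sessions, here is a minimal Python sketch. It is not part of OpenWGA or of any real load balancer: the backend names and URLs are hypothetical, and the rule of taking the route as the text after the first dot is an assumption based on the note about the Apache Load Balancer module above.

```python
from http.cookies import SimpleCookie

ROUTE_COOKIE = "WGLBROUTE"  # cookie name as described in the settings above

# Hypothetical mapping of route ids to backend nodes (names and URLs are made up).
BACKENDS = {
    "node1": "http://wga-node1.example.internal:8080",
    "node2": "http://wga-node2.example.internal:8080",
}


def extract_route(cookie_header):
    """Return the route id found in a Cookie header, or None if there is none."""
    cookie = SimpleCookie()
    cookie.load(cookie_header or "")
    morsel = cookie.get(ROUTE_COOKIE)
    if morsel is None:
        return None
    # Assumption: the route is whatever follows the first dot in the value,
    # which is why a value like ".node1" needs its leading dot.
    _, dot, route = morsel.value.partition(".")
    return route if dot and route else None


def pick_backend(cookie_header, fallback_index=0):
    """Choose a backend: sticky if a known route is present, else a fallback."""
    route = extract_route(cookie_header)
    if route in BACKENDS:
        return BACKENDS[route]
    # First request or unknown route: pick any node; that node would then
    # set its own route cookie on the response.
    names = sorted(BACKENDS)
    return BACKENDS[names[fallback_index % len(names)]]
```

A request carrying `WGLBROUTE=.node2` is always sent to the second node in this sketch, while a request without the cookie falls back to ordinary node selection.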

The OpenWGA Enterprise Cluster is regarded as a "beta feature" in OpenWGA Version 6.3, meaning it is feature complete, but there may still be unknown problems with the implementation that have yet to surface in live usage.

If you are building an OpenWGA enterprise cluster in your LAN, these settings may be all you need. OpenWGA will automatically find cluster nodes in a LAN that use the same cluster name.

However, if you are building a cluster across a WAN like the internet, you may need some additional settings to make it secure. You can find these additional settings by clicking "show/hide more options" on the bottom right of the configuration editor:

- Add setting "encrypt socket" to encrypt the cluster communication for usage on unsafe network routes

- Add setting "members" to statically define the IPs/DNS names of the server nodes that are part of the cluster

- Add setting "password" to define a credential for cluster group access, preventing rogue nodes from joining

- Add setting "interface" to define the network interface on which cluster communication should take place

Using OpenWGA enterprise session manager

One problem an application cluster must solve is replicating user session data across all nodes. This ensures that a user can switch from one node to another and still have their session state available.

This task can be handled outside of OpenWGA, for example via Tomcat session clustering. The OpenWGA Enterprise Edition, however, has its own implementation of session replication, the OpenWGA Enterprise HTTP Cluster Session Manager. It has the following features:

- Seamless transport of all session data to the nodes that are online in the OpenWGA enterprise cluster, using its communication framework with all configured settings, such as encryption

- Secure session mode: The session manager uses different session ids and sessions for insecure and secure HTTP communication. When going from insecure to secure the existing session data is copied into a new session which can only be accessed via secure connections. This protects the session in secure mode from being modified by attackers as its id is never transmitted insecurely.

- Avoidance of session id URL suffixes: Using a custom session manager for OpenWGA prevents the ";jsessionid=" URL suffix that is sometimes added to URLs. While this suffix is intended to transport the session id to clients that do not support cookies, it is rather unpopular with publishers and SEOs alike, so it is avoided here.
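The idea behind the secure session mode can be sketched in a few lines of Python. This is only an illustration of the concept described above, not the OpenWGA implementation; the class and method names are hypothetical.

```python
import secrets


class TwoTierSessionStore:
    """Toy session store with separate insecure and secure sessions."""

    def __init__(self):
        self._insecure = {}  # session id -> data, reachable over any connection
        self._secure = {}    # session id -> data, reachable over TLS only

    def create(self):
        """Create a new insecure session and return its id."""
        sid = secrets.token_urlsafe(32)
        self._insecure[sid] = {}
        return sid

    def lookup(self, sid, over_tls):
        """Return the session data, or None if it may not be accessed."""
        if sid in self._secure:
            # A secure session is never handed out on an insecure connection.
            return self._secure[sid] if over_tls else None
        return self._insecure.get(sid)

    def upgrade(self, insecure_sid):
        """Copy an insecure session into a new secure session with a new id."""
        data = dict(self._insecure.get(insecure_sid, {}))  # copy, not a shared reference
        secure_sid = secrets.token_urlsafe(32)
        self._secure[secure_sid] = data
        return secure_sid
```

Because the secure session receives a fresh id and its own copy of the data, later changes to the insecure session, for example by someone who sniffed its id on an unencrypted connection, do not reach the secure one.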

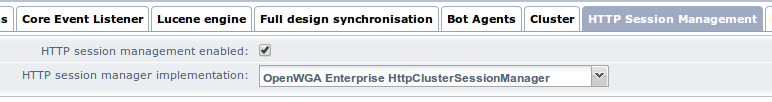

You find its configuration in OpenWGA admin client in "expert mode" (checkbox on the top right), in menu "Configuration" > Submenu "Advanced Configuration" > Tab "HTTP Session Management":

There, check "HTTP session management enabled" and select "OpenWGA Enterprise HttpClusterSessionManager".

The most noticeable change will be that clients now use a cookie named "WGSESSIONID" (or "WGSECURESESSIONID" on secure connections) instead of JSESSIONID. Otherwise the OpenWGA enterprise session manager should do its work quietly in the background.

The OpenWGA Enterprise session manager is regarded as a "beta feature" in OpenWGA Version 6.3, meaning it is feature complete, but there may still be unknown problems with the implementation that have yet to surface in live usage.

Administrative functions

With an OpenWGA enterprise cluster enabled, some additional functions for cluster management become available in the OpenWGA admin client.

Cluster overview

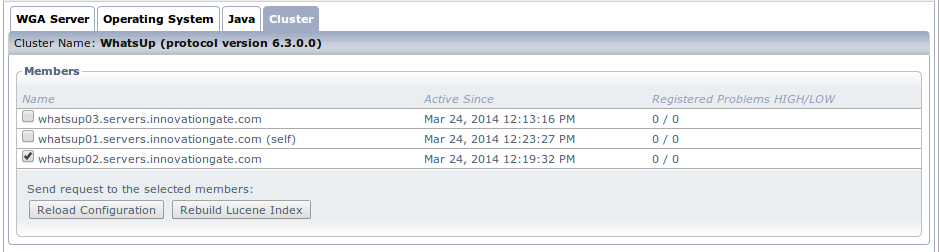

On menu "Runtime > Status" there is a new tab "Cluster":

It gives an overview of the currently active cluster nodes, plus the ability to start some server-wide processes for its nodes:

- Reloading the nodes' configuration

- Rebuilding the nodes' Lucene index

Web app configuration cluster overview

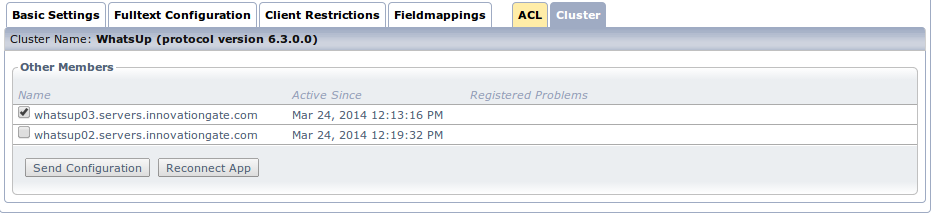

On a web app configuration there is also a new tab "Cluster":

Here the administrator can start tasks on other nodes regarding the current app:

- Send the configuration of this app to other nodes

- Reconnect the app on other nodes